
Chi, “Factorized Recurrent Neural Architectures for Longer Range Dependence,” in International Conference on Artificial Intelligence and Statistics, 2018, pp. Huo, “Deep Learning Image Reconstruction Simulation for Electromagnetic Tomography,” IEEE Sens. Haffner, “Gradient-based learning applied to document recognition,” Proc. Hochreiter, “Self-normalizing neural networks,” in Advances in Neural Information Processing Systems, 2017, pp. Gimpel, “Bridging nonlinearities and stochastic regularizers with Gaussian error linear units,” ArXiv Prepr. Sun, “Delving deep into rectifiers: Surpassing human-level performance on imagenet classification,” in Proceedings of the IEEE international conference on computer vision, 2015, pp. Hochreiter, “Fast and accurate deep network learning by exponential linear units (elus),” ArXiv Prepr. Li, “Empirical evaluation of rectified activations in convolutional network,” ArXiv Prepr. Burgard, “Deep feature learning for acoustics-based terrain classification,” in Robotics Research, Springer, 2018, pp. Zisserman, “Microscopy cell counting and detection with fully convolutional regression networks,” Comput. Zhou, “Rectified-Linear-Unit-Based Deep Learning for Biomedical Multi-label Data,” Interdiscip. Zimmer, “Deep learning massively accelerates super-resolution localization microscopy,” Nat. Ng, “Rectifier nonlinearities improve neural network acoustic models,” in Proc. Hinton, “Imagenet classification with deep convolutional neural networks,” in Advances in neural information processing systems, 2012, pp. LeCun, “What is the best multi-stage architecture for object recognition?,” in Computer Vision, 2009 IEEE 12th International Conference on, 2009, pp. Seung, “Digital selection and analogue amplification coexist in a cortex-inspired silicon circuit,” Nature, vol. Although there are other existing activation functions are also evaluated, this study elects ReLU as the baseline activation function. 
Apart from this, the study also noticed that FTS converges twice as fast as ReLU. As compared with ReLU, FTS (T=-0.20) improves MNIST classification accuracy by 0.13%, 0.70%, 0.67%, 1.07% and 1.15% on wider 5 layers, slimmer 5 layers, 6 layers, 7 layers and 8 layers DFNNs respectively. Based on the experimental results, FTS with a threshold value, T=-0.20 has the best overall performance. For a fair evaluation, all DFNNs are using the same configuration settings. Each activation function is trained using MNIST dataset on five different deep fully connected neural networks (DFNNs) with depth vary from five to eight layers. To verify its performance, this study evaluates FTS with ReLU and several recent activation functions. In this work, an activation function called Flatten-T Swish (FTS) that leverage the benefit of the negative values is proposed. Consequently, the deep neural network has not been benefited from the negative representations. Although ReLU has been popular, however, the hard zero property of the ReLU has heavily hindering the negative values from propagating through the network.

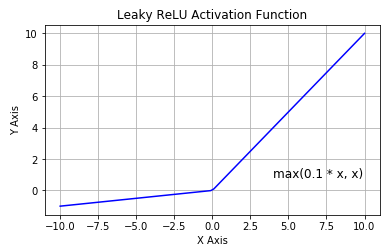

Rectified Linear Unit (ReLU) has been widely used and become the default activation function across the deep learning community since 2012. Activation functions are essential for deep learning methods to learn and perform complex tasks such as image classification.
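To make the contrast with ReLU concrete, here is a minimal sketch in plain Python. The text above does not give the FTS formula, so the code assumes the form usually quoted for it: `x * sigmoid(x) + T` for non-negative inputs and the constant `T` otherwise. The function names `relu` and `flatten_t_swish` are illustrative only and do not come from the study.

```python
import math


def relu(x: float) -> float:
    """ReLU: negative inputs are clamped to a hard zero."""
    return max(0.0, x)


def flatten_t_swish(x: float, t: float = -0.20) -> float:
    """Flatten-T Swish, under the assumed form described in the lead-in.

    For x >= 0 the output is x * sigmoid(x) + t; for x < 0 it is the
    constant threshold t, so negative inputs map to a negative value
    rather than to zero.
    """
    if x >= 0:
        return x / (1.0 + math.exp(-x)) + t
    return t


if __name__ == "__main__":
    # Compare the two activations on a few sample inputs.
    for x in (-2.0, -0.5, 0.0, 0.5, 2.0):
        print(f"x={x:+.1f}  relu={relu(x):.4f}  fts={flatten_t_swish(x):.4f}")
```

With the default `t=-0.20`, every negative input yields -0.20 where ReLU yields 0, which is one way to read the claim that FTS lets negative representations through.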
